Agentic AI Workflows: What They Are, How They Work, and When They Actually Make Sense

Co-Founder and CTO

If your “agent” just takes a prompt and returns an answer, that’s not a workflow. That’s a single request-response exchange.

Agentic AI workflows are different.

They’re not about generating text. They’re about executing toward a goal. That means planning, acting, checking results, and iterating. Sometimes across multiple systems. Sometimes across multiple agents.

There’s a lot of noise right now around AI agents, multi-agent systems, and autonomous AI workflows. Some of it is useful. Some of it is just rebranded automation.

This article breaks down what Agentic AI workflows actually are, how they differ from traditional AI pipelines, and how they show up in real production systems.

Let’s start with the definition.

What are Agentic AI workflows?

Agentic AI workflows are goal-driven execution flows powered by AI agents that can reason, plan, take actions using tools, evaluate outcomes, and iterate until a defined objective is met.

The key words there are goal-driven and execution.

Traditional AI systems respond to inputs. Agentic AI systems pursue outcomes.

In an agentic workflow, the system does not just answer a question. It can break a goal into subtasks, choose which tools to use, call APIs or external systems, evaluate intermediate results, retry or adjust based on feedback, and escalate when confidence is low.

This is why people often describe Agentic AI systems as moving from chat to action.

The workflow becomes the product.

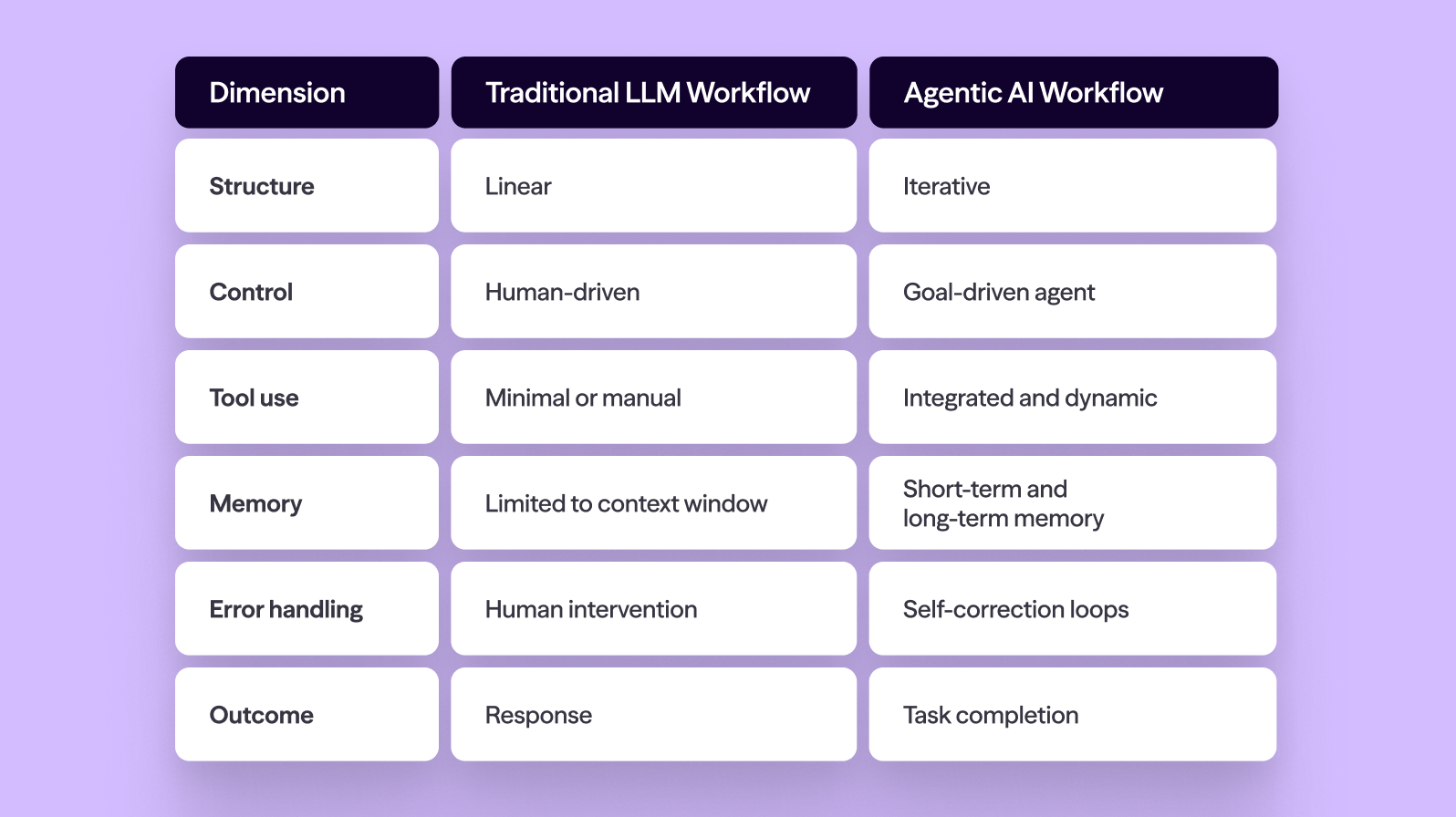

How Agentic AI workflows differ from traditional AI pipelines

Before you build one of these, it’s worth understanding what’s actually new here.

Traditional LLM workflows

Most early LLM orchestration looked like this:

1. Prompt comes in

2. LLM generates a response

3. Human reviews or takes action

Even with added prompt chains or retrieval-augmented generation, the structure is usually linear.

Prompt to model to output.

The human is the orchestrator.

If something goes wrong, the human fixes it. If more steps are needed, the human triggers them.

These pipelines are useful. They improve productivity. But they are not Agentic AI workflows.

Agentic AI workflows

In agentic workflows, the system owns progression toward a goal.

The flow looks more like this:

1. Goal defined

2. Agent generates a plan

3. Agent selects tools

4. Agent executes tasks

5. Agent evaluates results

6. Agent iterates or terminates

You now have planning, decision-making, tool use, memory, and feedback loops.
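
The flow above can be sketched as a small loop. This is a minimal illustration, not a production design: `plan`, `evaluate`, `search_orders`, and `send_reply` are hypothetical stand-ins for model calls and real tool integrations, and the fixed plan replaces what an LLM would generate.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical tools: plain functions standing in for real API calls.
def search_orders(query: str) -> str:
    return f"order found for {query}"

def send_reply(text: str) -> str:
    return f"reply sent: {text}"

TOOLS: dict[str, Callable[[str], str]] = {
    "search_orders": search_orders,
    "send_reply": send_reply,
}

@dataclass
class RunState:
    goal: str
    history: list[tuple[str, str]] = field(default_factory=list)  # (tool, result)
    done: bool = False

def plan(goal: str) -> list[tuple[str, str]]:
    """Stand-in for a model call that turns a goal into (tool, argument) steps."""
    return [("search_orders", goal), ("send_reply", "your order is on its way")]

def evaluate(result: str) -> bool:
    """Stand-in check; a real system would validate the result against the goal."""
    return bool(result) and "error" not in result

def run_agent(goal: str, max_steps: int = 10) -> RunState:
    state = RunState(goal=goal)
    pending = plan(goal)                      # generate a plan
    steps_taken = 0
    while pending and steps_taken < max_steps:
        tool_name, argument = pending.pop(0)
        result = TOOLS[tool_name](argument)   # select the tool and execute it
        steps_taken += 1
        state.history.append((tool_name, result))
        if not evaluate(result):              # evaluate the result
            return state                      # terminate; a real agent might re-plan or escalate
    state.done = not pending                  # done only if every planned step ran
    return state

state = run_agent("order 123")
```

The `max_steps` cap is the simplest possible termination condition. Without it, a loop like this has no guaranteed exit once re-planning is added.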

The orchestration shifts from human-driven to goal-driven.

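Put side by side: a traditional pipeline is linear, and a human decides what happens next. An agentic workflow is iterative, and the goal decides what happens next.

That shift is why Agentic AI workflows are attracting attention from engineers and CTOs. They represent a move from assistance to execution.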

Core components of an Agentic AI workflow

If you strip away the marketing language, most Agentic AI systems share the same core building blocks.

AI agent (reasoning core)

At the center is an LLM-powered reasoning engine. This is the brain that interprets goals, decomposes tasks, and decides next steps.

This is not just text generation. It’s structured decision-making.

Goals and constraints

Agentic workflows require explicit goals.

“Answer the customer” is not a goal.

“Resolve the issue and close the case within policy constraints” is closer.

Constraints matter just as much. Permissions, rate limits, brand tone rules, and compliance policies define what the agent is allowed to do.

Without constraints, autonomy becomes risk.
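
One way to make this concrete is to treat the goal and its constraints as data the agent is checked against before every action. The sketch below assumes a support scenario; the field names, the action names, and the refund limit are all hypothetical and exist only for illustration.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Constraints:
    allowed_actions: frozenset[str]   # permission model (hypothetical action names)
    max_tool_calls: int               # crude cap on how much the agent may do
    max_refund_usd: float             # example policy limit (assumed, not from any real policy)

@dataclass(frozen=True)
class Goal:
    objective: str
    success_criteria: str
    constraints: Constraints

def is_allowed(goal: Goal, action: str, calls_so_far: int, amount_usd: float = 0.0) -> bool:
    """Return True only if the action stays inside every constraint."""
    c = goal.constraints
    return (
        action in c.allowed_actions
        and calls_so_far < c.max_tool_calls
        and amount_usd <= c.max_refund_usd
    )

goal = Goal(
    objective="Resolve the issue and close the case within policy constraints",
    success_criteria="case is closed and the customer confirmed the resolution",
    constraints=Constraints(
        allowed_actions=frozenset({"lookup_order", "issue_refund", "close_case"}),
        max_tool_calls=20,
        max_refund_usd=50.0,
    ),
)
```

The check runs outside the model. The agent can propose anything, but only actions that pass `is_allowed` are executed.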

Planning and task decomposition

Planning is what separates Agentic AI workflows from simple AI workflow automation.

Given a goal, the agent must decide what sub-tasks are required, what order they should run in, and what dependencies exist.

This planning phase may be explicit or implicit, but it’s there.
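
When the plan is explicit, ordering sub-tasks by their dependencies is a topological sort. The sketch below uses Python’s standard `graphlib` module; the sub-task names are hypothetical, and in a real system the dependency map would come from the agent’s planning step rather than being hard-coded.

```python
from graphlib import CycleError, TopologicalSorter

# Each sub-task maps to the set of sub-tasks it depends on (hypothetical plan).
dependencies: dict[str, set[str]] = {
    "research_company": set(),
    "identify_decision_makers": {"research_company"},
    "summarize_context": {"research_company", "identify_decision_makers"},
    "draft_message": {"summarize_context"},
}

def execution_order(deps: dict[str, set[str]]) -> list[str]:
    """Return sub-tasks in an order that respects every dependency."""
    try:
        return list(TopologicalSorter(deps).static_order())
    except CycleError as exc:
        # A circular plan can never finish, so reject it before executing anything.
        raise ValueError("plan contains a circular dependency") from exc

order = execution_order(dependencies)
```

Validating the plan before execution is cheap, and it catches one class of infinite loop before any tool is called.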

Tool use and action execution

AI agents become useful when they can interact with external systems.

That means calling APIs, querying databases, updating CRM records, sending messages, creating tickets, or running code.

Tool invocation is where AI agent architecture becomes critical. Permissions, authentication, and logging need to be production-grade.
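
A minimal sketch of that idea: every tool call goes through one registry that checks permissions and logs the call. The `Tool` and `ToolRegistry` classes, the scope strings, and `create_ticket` are hypothetical names for illustration, not the API of any particular framework.

```python
import logging
from dataclasses import dataclass
from typing import Any, Callable

logger = logging.getLogger("agent.tools")

@dataclass(frozen=True)
class Tool:
    name: str
    func: Callable[..., Any]
    required_scope: str   # permission the caller must hold (hypothetical scope string)

class ToolRegistry:
    def __init__(self) -> None:
        self._tools: dict[str, Tool] = {}

    def register(self, tool: Tool) -> None:
        self._tools[tool.name] = tool

    def is_permitted(self, name: str, granted_scopes: set[str]) -> bool:
        tool = self._tools.get(name)
        return tool is not None and tool.required_scope in granted_scopes

    def invoke(self, name: str, granted_scopes: set[str], **kwargs: Any) -> Any:
        if name not in self._tools:
            raise KeyError(f"unknown tool: {name}")
        if not self.is_permitted(name, granted_scopes):
            logger.warning("denied tool=%s scopes=%s", name, sorted(granted_scopes))
            raise PermissionError(f"{name} requires {self._tools[name].required_scope}")
        logger.info("invoke tool=%s args=%s", name, kwargs)
        result = self._tools[name].func(**kwargs)
        logger.info("done tool=%s", name)
        return result

def create_ticket(title: str) -> dict[str, str]:
    # Stand-in for a real ticketing API call.
    return {"id": "TICKET-1", "title": title}

registry = ToolRegistry()
registry.register(Tool("create_ticket", create_ticket, required_scope="tickets:write"))
ticket = registry.invoke("create_ticket", {"tickets:write"}, title="Reset VPN access")
```

Routing every call through a single `invoke` method means the permission check and the log entry cannot be skipped by accident.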

Memory

There are typically two types.

Short-term memory is context within a workflow session.

Long-term memory is stored history across sessions.

Memory enables continuity. Without it, every execution is stateless.
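
The difference between the two is easiest to see in code. In this sketch, short-term memory is an in-process list that disappears with the session, and long-term memory is a JSON file standing in for whatever persistent store a real system would use. The class names and the file-based storage are assumptions made for illustration.

```python
import json
import tempfile
from pathlib import Path

class ShortTermMemory:
    """Context for a single workflow session; gone when the session ends."""

    def __init__(self, max_items: int = 20) -> None:
        self.max_items = max_items
        self.items: list[str] = []

    def add(self, note: str) -> None:
        self.items.append(note)
        self.items = self.items[-self.max_items:]   # keep only the most recent notes

class LongTermMemory:
    """History persisted across sessions; a JSON file stands in for a real store."""

    def __init__(self, path: Path) -> None:
        self.path = path

    def load(self) -> list[str]:
        if not self.path.exists():
            return []
        return json.loads(self.path.read_text(encoding="utf-8"))

    def append(self, note: str) -> None:
        history = self.load()
        history.append(note)
        self.path.write_text(json.dumps(history), encoding="utf-8")

store_path = Path(tempfile.mkdtemp()) / "agent_memory.json"

# Session 1 learns something and persists it.
session1 = ShortTermMemory()
session1.add("customer prefers email")
LongTermMemory(store_path).append("customer prefers email")

# Session 2 starts with empty short-term memory but can recall stored history.
session2 = ShortTermMemory()
recalled = LongTermMemory(store_path).load()
```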

Feedback, evaluation, and self-correction

Agentic workflows include evaluation loops.

After executing a step, the agent assesses whether the action moved closer to the goal, whether the result was valid, whether it should retry, and whether escalation is needed.

This is where reliability either improves or collapses.
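
Those four questions can be reduced to a small decision function. The sketch below is one possible policy, not a standard: the `progress` and `confidence` scores, the thresholds, and the retry-then-escalate ordering are all assumptions.

```python
from dataclasses import dataclass
from enum import Enum

class Decision(Enum):
    CONTINUE = "continue"
    RETRY = "retry"
    ESCALATE = "escalate"

@dataclass
class StepResult:
    valid: bool         # was the result well-formed and usable?
    progress: float     # 0.0 to 1.0, estimated movement toward the goal (hypothetical score)
    confidence: float   # 0.0 to 1.0, how much the agent trusts the result (hypothetical score)
    attempts: int       # how many times this step has been tried so far

def decide(result: StepResult, max_attempts: int = 3, min_confidence: float = 0.7) -> Decision:
    """Continue on a good result, retry while attempts remain, otherwise escalate."""
    good = result.valid and result.progress > 0 and result.confidence >= min_confidence
    if good:
        return Decision.CONTINUE
    if result.attempts < max_attempts:
        return Decision.RETRY
    return Decision.ESCALATE
```

Keeping the policy in plain code rather than in a prompt makes it testable, which matters when this is the step that determines reliability.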

How Agentic AI workflows actually work step by step

Let’s walk through a concrete example.

Imagine a goal: research a prospective customer and draft a personalized outreach email.

Goal intake: The system receives a structured goal definition.

Plan generation: The agent breaks the goal into subtasks such as researching the company, identifying decision-makers, summarizing relevant context, and drafting a personalized message.

Tool selection: The agent chooses tools such as web search APIs, LinkedIn data APIs, internal CRM systems, and drafting models.

Execution: The agent queries tools, retrieves data, composes summaries, and drafts a message.

Evaluation: The agent evaluates alignment with brand tone, grounding in actual data, and confidence thresholds.

Iteration or termination: If confidence is low, the agent refines. If sufficient, it submits for review or sends based on policy.

That entire flow is an Agentic AI workflow.

Notice what’s missing. There is no human manually triggering each step.
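
Here is the same outreach example as a toy program. Everything in it is a stand-in: `research_company` returns canned data instead of calling search or CRM tools, `draft_email` fakes a model whose confidence rises with each refinement, and the 0.8 threshold is an arbitrary assumption.

```python
from dataclasses import dataclass

@dataclass
class Draft:
    text: str
    confidence: float

def research_company(name: str) -> dict[str, str]:
    # Stand-in for web search and CRM lookups.
    return {"company": name, "industry": "logistics", "contact": "Head of Operations"}

def draft_email(facts: dict[str, str], attempt: int) -> Draft:
    # Stand-in for a drafting model; pretend each refinement raises confidence.
    text = f"Hi {facts['contact']}, I saw that {facts['company']} works in {facts['industry']}."
    return Draft(text=text, confidence=0.5 + 0.2 * attempt)

def is_grounded(draft: Draft, facts: dict[str, str]) -> bool:
    # Crude grounding check: every researched fact must appear in the draft.
    return all(value in draft.text for value in facts.values())

def run_outreach(company: str, threshold: float = 0.8, max_attempts: int = 3) -> tuple[str, Draft]:
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    facts = research_company(company)                  # execution: gather data
    for attempt in range(max_attempts):
        draft = draft_email(facts, attempt)            # execution: draft or refine
        if is_grounded(draft, facts) and draft.confidence >= threshold:   # evaluation
            return "submit_for_review", draft          # termination
    return "escalate", draft                           # attempts exhausted

status, final_draft = run_outreach("Acme")
```

The caller states the goal once. Research, drafting, evaluation, and refinement all happen inside `run_outreach` without a human triggering each step.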

Real-world use cases for Agentic AI workflows

Agentic AI workflows are most useful when tasks are multi-step and tool-heavy.

Customer support automation can include classifying issues, retrieving order history, checking policy eligibility, generating responses, updating ticket status, and escalating when required.

Sales research and outreach agents can monitor inbound signals, research prospects, generate tailored outreach, log activity in CRM systems, and schedule follow-ups.

Software engineering copilots can interpret feature requests, search codebases, propose implementation plans, write code, run tests, and iterate on failures.

Data analysis agents can interpret business questions, query databases, run transformations, generate charts, validate anomalies, and produce reports.

Internal IT agents can triage tickets, provision resources, reset access, and document actions.

The pattern is consistent. Multi-step execution. Tool usage. Feedback loops.

Common Agentic AI workflow patterns

Not all Agentic AI systems are structured the same way.

Single-agent with tools is the simplest architecture. One reasoning agent with multiple tool integrations and a clear goal.

Multi-agent systems divide responsibilities across agents. For example, a planner agent, research agent, executor agent, and reviewer agent. This increases modularity but also complexity.

Supervisor and worker models introduce a high-level agent that assigns tasks to specialized worker agents.

Human-in-the-loop workflows allow agents to execute steps but pause for approval at defined checkpoints. This is often the most practical enterprise model.
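
The supervisor and human-in-the-loop patterns combine naturally. In the sketch below, a supervisor function routes tasks to worker functions and pauses at a checkpoint before accepting output from flagged workers. The worker names, the `NEEDS_APPROVAL` policy, and the `approve` callback are hypothetical; in practice the callback would be a review queue or approval UI.

```python
from typing import Callable

Worker = Callable[[str], str]

def research_worker(task: str) -> str:
    return f"research notes for: {task}"

def writer_worker(task: str) -> str:
    return f"draft for: {task}"

WORKERS: dict[str, Worker] = {"research": research_worker, "write": writer_worker}

# Workers whose output must be approved by a human before the run continues
# (hypothetical policy).
NEEDS_APPROVAL = {"write"}

def supervise(
    assignments: list[tuple[str, str]],
    approve: Callable[[str, str], bool],
) -> list[str]:
    """Route each (worker_name, task) pair to its worker, gating flagged steps."""
    results: list[str] = []
    for worker_name, task in assignments:
        output = WORKERS[worker_name](task)
        if worker_name in NEEDS_APPROVAL and not approve(worker_name, output):
            results.append(f"{worker_name}: held for human review")
            break   # stop the run at the checkpoint
        results.append(output)
    return results

assignments = [("research", "Acme Corp"), ("write", "intro email to Acme Corp")]
approved_run = supervise(assignments, approve=lambda worker, output: True)
rejected_run = supervise(assignments, approve=lambda worker, output: False)
```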

Challenges and risks of Agentic AI workflows

This is where production reality matters.

Reliability remains a concern, and hallucinations still happen. Agents can reason incorrectly, call the wrong tool, or misinterpret results. Monitoring and evaluation frameworks are essential.

Tool misuse and infinite loops are real risks. Agents can retry excessively or cascade errors. Termination conditions and rate limits are required.
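
A termination condition can be as simple as a budget the run is charged against. This sketch caps total steps and retries per action; the `RunBudget` class and its limits are assumptions, and time-based rate limiting is deliberately left out to keep the example short.

```python
class BudgetExceeded(Exception):
    """Raised when a run hits a hard limit and must stop."""

class RunBudget:
    def __init__(self, max_steps: int, max_retries_per_action: int) -> None:
        self.max_steps = max_steps
        self.max_retries_per_action = max_retries_per_action
        self.steps = 0
        self.attempts: dict[str, int] = {}

    def charge(self, action: str) -> None:
        """Count one attempt at `action`; raise if any limit is exceeded."""
        self.steps += 1
        self.attempts[action] = self.attempts.get(action, 0) + 1
        if self.steps > self.max_steps:
            raise BudgetExceeded(f"step limit of {self.max_steps} reached")
        # One initial attempt plus the allowed number of retries.
        if self.attempts[action] > self.max_retries_per_action + 1:
            raise BudgetExceeded(f"retry limit reached for {action}")

def run_with_budget(actions: list[str], budget: RunBudget) -> str:
    try:
        for action in actions:
            budget.charge(action)   # a real agent would execute the action after this check
    except BudgetExceeded as exc:
        return f"stopped: {exc}"
    return "completed"

normal = run_with_budget(["lookup_order", "send_reply"], RunBudget(5, 2))
looping_budget = RunBudget(max_steps=50, max_retries_per_action=2)
looping = run_with_budget(["call_api"] * 10, looping_budget)
```

An agent stuck retrying the same call is stopped on its fourth attempt here, long before the overall step limit is reached.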

Cost and latency grow with every step, because a single workflow run can involve many model calls and API requests.

Observability and debugging are non-trivial. Structured logs, action traces, decision records, and evaluation metrics are necessary.

Governance and safety are mandatory. Agents that can act must operate within strict permission models and produce auditable logs.
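
A minimal sketch of an auditable action trace, assuming one JSON line per step. The field names and the `Trace` class are hypothetical; the point is that each record captures what the agent did, why it decided to, and whether it worked, under a run ID that ties the steps together.

```python
import json
import time
import uuid
from dataclasses import asdict, dataclass, field

@dataclass
class TraceEvent:
    run_id: str
    step: int
    action: str      # what the agent did
    decision: str    # why it chose to do it
    ok: bool         # whether the action succeeded
    timestamp: float = field(default_factory=time.time)

class Trace:
    def __init__(self) -> None:
        self.run_id = str(uuid.uuid4())
        self.events: list[TraceEvent] = []

    def record(self, action: str, decision: str, ok: bool) -> None:
        step = len(self.events) + 1
        self.events.append(TraceEvent(self.run_id, step, action, decision, ok))

    def to_jsonl(self) -> str:
        """Serialize the trace as one JSON object per line."""
        return "\n".join(json.dumps(asdict(e), sort_keys=True) for e in self.events)

trace = Trace()
trace.record("lookup_order", "order ID present in ticket", ok=True)
trace.record("issue_refund", "policy allows refund under limit", ok=False)
lines = trace.to_jsonl().splitlines()
```

Structured records like these can be filtered by `run_id` or `action` when debugging, which free-form log text does not allow.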

When to use Agentic AI workflows and when not to

Agentic AI workflows are a good fit when the process is multi-step and tool-heavy, the goal is clear, and coordination overhead is high.

They are overkill for simple question-answer tasks, single-step transformations, ambiguous goals, or emotionally sensitive interactions that require human nuance.

If a deterministic script can solve the problem reliably, you may not need an agent.

Autonomy should earn its place.

Tools and frameworks commonly used

Many frameworks now support Agentic AI workflow development.

Teams commonly use agent frameworks for reasoning loops, orchestration layers for task coordination, tool abstraction layers, evaluation and monitoring systems, and guardrail or policy engines.

The framework matters less than architectural discipline.

Production Agentic AI systems require strong permission models, observability, cost controls, and clear ownership.

This is infrastructure, not experimentation.

The future of Agentic AI workflows

We are moving from prompt engineering to system engineering.

Early AI adoption improved individual tasks. The next phase focuses on autonomous AI workflows that operate reliably within constraints.

Enterprise adoption will likely follow a pattern: assistive agents, human-in-the-loop execution, controlled autonomy, then expanded multi-agent systems.

The emphasis will shift toward guardrails, observability, and reliability.

The companies that succeed will not be the ones with the most agents. They will be the ones with disciplined orchestration and production-grade systems.

Agentic AI workflows are powerful. But power without structure creates chaos.

Execution, not demos, is what matters.
